The Importance Of Simulcast in WebRTC

- March 16, 2021

- WebRTC

- Real-time communication

- SFU

- Simulcast

- webrtc app development

Multiparty video infrastructure is daunting to deal with. That said, the technology, standards, and products enabling multiparty video in WebRTC have matured considerably in the past few years. Simulcast is one of the key underlying technologies behind these changes in multiparty conferencing.

RTCWeb.in has long worked with clients who want to create live broadcast solutions where users broadcast their streams from a single session to a large audience. While we have extensive experience with WebRTC technologies, we haven’t covered Simulcast in any of our previous blog posts. In this post, we hope you find answers to the questions you may have about Simulcast.

***

WebRTC technology is maturing, with use cases beyond person-to-person calling, video telephony, and enterprise conferencing. WebRTC-powered applications can add real-time media communications and can be deployed across industry verticals, be it personal real-time broadcasting, healthcare, dating, or others.

The diverse blend of use cases, growing networking infrastructure, and the high number of device endpoints pose a very interesting challenge: quality of experience. Media optimization and efficient quality control have become more important than ever in the new WebRTC environment.

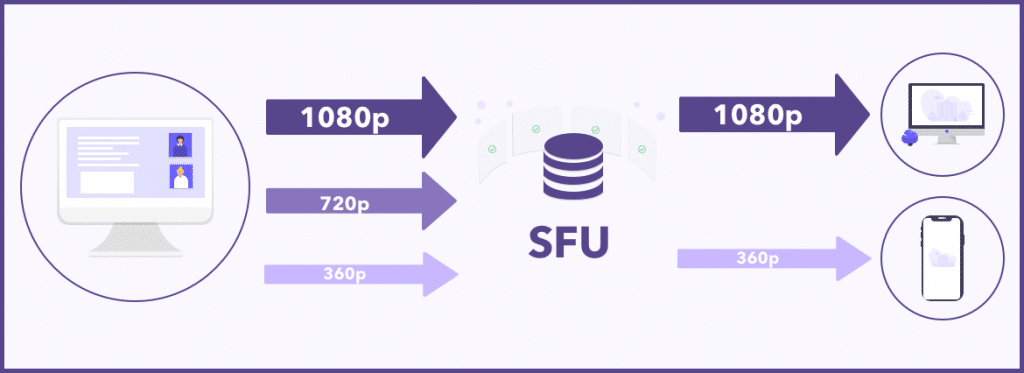

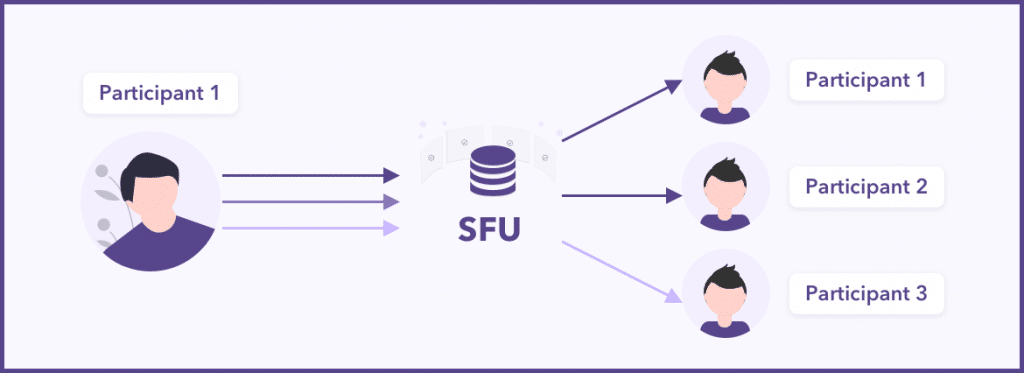

Simulcast enables WebRTC clients to encode the same video stream at several resolutions and bitrates. A media router, the Selective Forwarding Unit (SFU), then decides which of those streams to forward to which receiver.

Simulcast is one of the most interesting aspects of WebRTC, especially in the context of multiparty conferencing. In a nutshell, with simulcast a single endpoint simultaneously sends several independent versions of the same stream at different resolutions.

Simulcast-based platforms encode and transmit a set of qualities (combinations of resolution and frame rate) for the same stream, ranging from a minimal quality to the highest quality needed.
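As a rough illustration of such a set of qualities, the sketch below describes three layers of one video stream. The `SimulcastEncoding` interface is a hypothetical local type that mirrors a few fields of the browser's `RTCRtpEncodingParameters` (`rid`, `scaleResolutionDownBy`, `maxBitrate`, `maxFramerate`); the layer names, bitrates, and frame rates are assumed values chosen for the example, not recommendations.

```typescript
// Hypothetical local type mirroring a subset of the browser's
// RTCRtpEncodingParameters, so the sketch also runs outside a browser.
interface SimulcastEncoding {
  rid: string;                   // identifier of the layer
  scaleResolutionDownBy: number; // 1 = capture size, 2 = half width and height
  maxBitrate: number;            // bits per second
  maxFramerate: number;          // frames per second
}

// Three layers, ordered from lowest to highest quality.
// All numbers are illustrative assumptions.
function buildSimulcastEncodings(): SimulcastEncoding[] {
  return [
    { rid: "low", scaleResolutionDownBy: 4, maxBitrate: 150000, maxFramerate: 15 },
    { rid: "mid", scaleResolutionDownBy: 2, maxBitrate: 500000, maxFramerate: 30 },
    { rid: "high", scaleResolutionDownBy: 1, maxBitrate: 1500000, maxFramerate: 30 },
  ];
}

// Resolution each layer would have for a given capture size.
function layerResolutions(
  width: number,
  height: number,
  encodings: SimulcastEncoding[],
): { rid: string; width: number; height: number }[] {
  return encodings.map((e) => ({
    rid: e.rid,
    width: Math.floor(width / e.scaleResolutionDownBy),
    height: Math.floor(height / e.scaleResolutionDownBy),
  }));
}

// In a browser, the encodings would typically be passed when adding the track:
//   pc.addTransceiver(videoTrack, {
//     direction: "sendonly",
//     sendEncodings: buildSimulcastEncodings(),
//   });
```

For a 1280x720 capture, `layerResolutions(1280, 720, buildSimulcastEncodings())` yields 320x180, 640x360, and 1280x720 for the three layers.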

***

Video Encoding

The quality of a video stream can be layered along three dimensions:

- Resolution

- Frame-rate

- Encoding quality (quantization)

In a multiparty call, sending the same resolution, frame rate, and encoding quality to every user is not adequate. Ideally, each participant receives a distinct stream in which one or more of these three dimensions is adjusted independently. The right combination of layered video encoding and SFU-based multiparty video routing avoids the heavy cost of MCUs (Multipoint Control Units).

This is how every user receives a gracefully degraded version of the original full-quality stream, according to their needs and capabilities.

***

Enabling Simulcast

To benefit from simulcast in your WebRTC platform, two things are needed:

- Simulcast support in the WebRTC endpoints, such as the browser.

- Logic in your SFU that selects which quality to send to which receiver.
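The selection logic in the second point can be sketched as follows. This is a minimal example under stated assumptions, not how any particular SFU implements it: the `Layer` type and `selectLayer` function are hypothetical names, and the receiver's available bandwidth is assumed to come from the SFU's own bandwidth estimation.

```typescript
// Hypothetical description of one simulcast layer as seen by the SFU.
interface Layer {
  rid: string;     // identifier of the layer
  bitrate: number; // bits per second the layer needs
}

// Pick the highest-bitrate layer that fits the receiver's estimated bandwidth.
// If no layer fits, fall back to the lowest one so the receiver still gets video.
function selectLayer(layers: Layer[], availableBps: number): Layer {
  if (layers.length === 0) {
    throw new Error("at least one layer is required");
  }
  // Sort a copy from lowest to highest bitrate; the input is left untouched.
  const sorted = [...layers].sort((a, b) => a.bitrate - b.bitrate);
  let chosen = sorted[0];
  for (const layer of sorted) {
    if (layer.bitrate <= availableBps) {
      chosen = layer;
    }
  }
  return chosen;
}

// Example layers with assumed bitrates.
const exampleLayers: Layer[] = [
  { rid: "low", bitrate: 150000 },
  { rid: "mid", bitrate: 500000 },
  { rid: "high", bitrate: 1500000 },
];

console.log(selectLayer(exampleLayers, 600000).rid); // prints "mid"
```

A production SFU would usually also consider the receiver's screen size, CPU load, and which participant is currently speaking, and would switch layers only at keyframes; this sketch looks at bandwidth alone.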

***

Summing Up

How much simulcast improves things depends on the specific use case and on factors such as the type of devices, the networks, the resolutions, and the number of receivers.

Simulcast is most beneficial when a large number of people receive the video streamed by a sender.

Without simulcast, the sender encodes a single video stream for the receiver with the least bandwidth, and the more participants there are, the more likely it is that at least one of them is on a poor network. With simulcast, the bitrate each endpoint receives no longer depends on the number of users. Every participant can receive a quality suited to their network and device capacity without affecting the others, which gives an overall higher average bitrate across the platform.
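To make the bitrate argument concrete, here is a small worked comparison. It is a simplified model with assumed numbers: the function names, receiver bandwidths, and layer bitrates are all hypothetical, and real deployments adapt continuously rather than picking a rate once.

```typescript
// Without simulcast: one encoding, capped by the weakest receiver,
// so every receiver gets the same bitrate.
function averageWithoutSimulcast(receiversBps: number[], maxStreamBps: number): number {
  if (receiversBps.length === 0) throw new Error("no receivers");
  return Math.min(maxStreamBps, ...receiversBps);
}

// With simulcast: each receiver gets the highest layer that fits its bandwidth,
// or the lowest layer if none fits.
function averageWithSimulcast(receiversBps: number[], layersBps: number[]): number {
  if (receiversBps.length === 0) throw new Error("no receivers");
  if (layersBps.length === 0) throw new Error("no layers");
  const sorted = [...layersBps].sort((a, b) => a - b);
  const total = receiversBps.reduce((sum, bandwidth) => {
    const fitting = sorted.filter((layer) => layer <= bandwidth);
    const chosen = fitting.length > 0 ? fitting[fitting.length - 1] : sorted[0];
    return sum + chosen;
  }, 0);
  return total / receiversBps.length;
}

// Four receivers (bits per second); one of them is on a poor network.
const receivers = [2000000, 2000000, 600000, 150000];
// Three simulcast layers; the single-stream case is capped at the top layer's bitrate.
const layers = [150000, 500000, 1500000];

console.log(averageWithoutSimulcast(receivers, 1500000)); // prints 150000
console.log(averageWithSimulcast(receivers, layers));     // prints 912500
```

In this example the single poor connection drags everyone down to 150 kbps without simulcast, while with simulcast the average received bitrate is about 912 kbps and the weakest receiver is no worse off.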

More on and around Simulcast will follow in this space. If you have any questions or suggestions feel free to reach out to us.

About RTCWeb.in

We are a WebRTC development company, operating in the industry to deliver the best video conferencing solution to our clients. All our WebRTC services are client-centric and we make products that fulfill your requirements and help you achieve your business goal. Contact us now for WebRTC development services.